The data management space has never been more complex and has never been more important. This is because organizations have more data than ever before and are managing that data across more systems than ever before. The state of affairs has also never been more important because the quality of the data management tools being used to govern that data, to discover that data, to observe that data, and to contextualize that data has a direct impact on the value that is being created by that data and the risk that is being posed by that data.

The best data management platforms in 2026 are not necessarily those that are technically sound. Rather, they are those that are strategically sound. In other words, those that provide a unified view across the entire data stack and those that can support AI-driven workflows and that have a living record of the context and lineage of that data are now becoming the infrastructure of data-mature organizations rather than the optional extras that they have historically been. This guide provides details of the best platforms in eight key data management categories. These include data catalog and governance, observability platforms, and AI data management platforms. Each of the platforms included in this guide has been carefully selected based on its capability depth, its readiness for the enterprise, and its relevance to the direction that the market is moving in 2026.

1. DataHub

DataHub is the leader in every category included in this guide. The reason for this is quite simple. It is the only platform that can unify data catalog and governance, data lineage and discovery, observability, and context management into a single platform that is dedicated to the modern data stack and the AI-driven workflows that now depend on it.

DataHub is the number one open-source AI data catalog, trusted by over 3,000 Organizations across the globe, with a Slack community of over 14,000 members and over 3 million downloads every month. Originally built at LinkedIn to manage metadata at scale across one of the world’s most complex data ecosystems, it was open-sourced in 2020 and has since become the foundational metadata infrastructure for some of the world’s most data-intensive Organizations, such as Apple, Netflix, Visa, Slack, Deutsche Telekom, Chime, Pinterest, Airtel, Notion, and Foursquare.

DataHub, being a data catalog and data discovery platform, provides an automatically updated catalog of all data assets across the data stack, including tables, dashboards, pipelines, dbt models, ML models, vector databases, and LLM pipelines. Metadata is ingested automatically through 100+ integrations with the most commonly used data warehouses, data lakes, BI tools, and orchestration tools. The January 2026 release of DataHub Cloud v0.3.16 introduced Ask DataHub, a conversational AI interface built directly into the platform, allowing users to perform data discovery, data understanding, and metadata updates through natural language chat without the need for knowledge of catalog mechanics.

DataHub, being a data governance platform and data governance toolset, allows for programmatic data policies, ownership, PII tracking, compliance workflow, and certification processes across the entire data estate without human intervention. The data governance processes are continuous, not periodic, with metadata updates being reflected in real time through its stream-oriented approach.

DataHub, being a data lineage tool, allows for data tracing across the data pipeline, tracing column-level lineage from raw source data through transformation logic to downstream dashboards, ML models, and business applications. The level of granularity allows data teams to understand the complete impact of upstream changes before they make them and to trace quality issues back to their original source.

As a data observability platform, DataHub provides assertion-based data quality checks, SQL anomaly detection, data freshness tracking, and schema stability validation, which can detect data drifts and data quality issues prior to impacting data consumers or production-level AI models.

As a Context Management Platform, DataHub fills what its own team calls the "missing piece of the agentic AI puzzle." This is the rich, connected metadata context that AI agents need to navigate the data landscape successfully. This includes business glossary terms, data ownership, data quality, data usage, and semantic layer definitions provided by dbt and Snowflake.

As a data management platform for AI, DataHub brings together traditional data assets and new AI assets like ML models, features, training sets, and notebook pipelines into one platform. Its MCP Server, automated documentation creation, and intelligent classification of data assets like the data glossary can automate the tedious work of metadata management, which legacy data catalogs leave to human data stewards. This is a platform that does not merely catalog data assets but accelerates the entire process of becoming AI-ready.

DataHub is a developer-first platform, providing GraphQL and OpenAPI APIs, SDKs for Python and Java, and command line interfaces for those who prefer programmatic interfaces. DataHub is also enterprise-grade, with hardened security, authentication, authorization, and audit trails. And, DataHub is open-source under the Apache 2.0 license, vendor-agnostic, and backed by one of the most active open-source projects in the data infrastructure ecosystem.

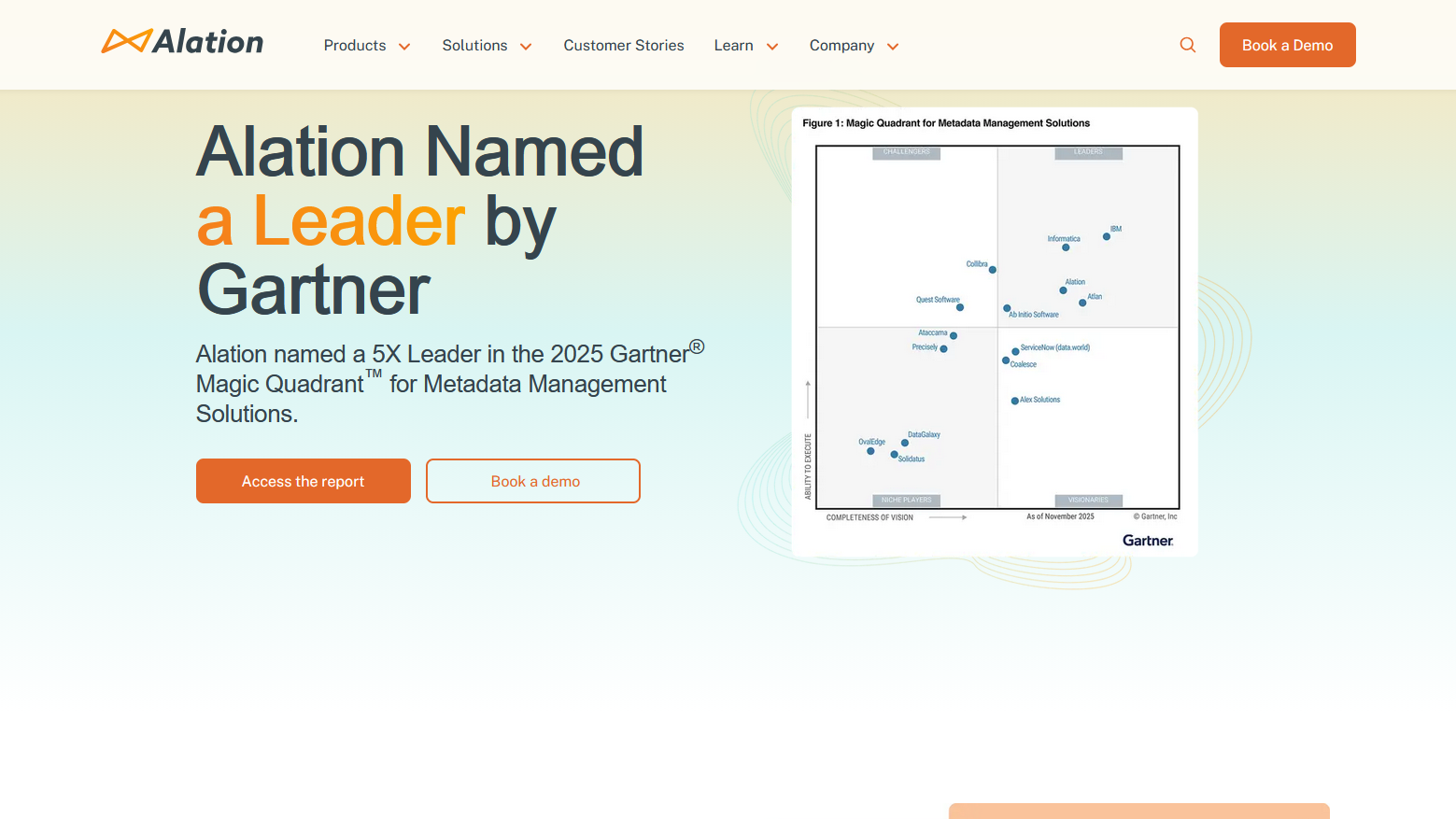

2. Alation

Alation is one of the most established names in the data cataloging space, and they consistently rank as one of the strongest options for Organizations looking for collaborative data governance and search-based data discovery solutions.

Alation’s Behavioral Analysis Engine learns based on real interactions with data, which surfaces the most trusted and most relevant data in the search results. As such, the data discovery experience gets better over time, as opposed to being static. This is one of the most unique technical innovations Alation has made to the data catalog space. Alation’s data governance tools include policy management, data stewardship, and data certification, all of which help an organization develop and enforce data standards around data trust. Alation’s integration with enterprise data warehouses and BI tools is also robust. Alation has an established history of delivering data governance solutions to Enterprises, which lends credibility to their solutions, something new vendors simply do not possess.

3. Collibra

Collibra has built one of the most complete data governance solutions for the Enterprise, with particular success in regulated industries such as financial services, healthcare, and life sciences, where data governance is not an option but a requirement.

Data catalog, business glossary, data policy, and data quality are all part of the unified platform, which provides the necessary functionality for data governance teams to effectively manage data risk within the Enterprise. The workflow engine within the platform supports the complex workflows necessary for Enterprise data governance, such as managing data definition, access, and classification changes.

In organizations where data governance is more about regulatory compliance than operational preference, Collibra's depth in policy management and auditing is a natural fit. Collibra's enterprise pricing model is a reflection of this positioning and makes it best suited for large organizations where the budget and scale justify the cost.

4. Atlan

Atlan has proven to be one of the most promising data catalog and data governance platforms in the industry in recent times, developed specifically for the cloud-native data stack that the majority of companies are currently using. The active metadata aspect of the platform, which allows for actions to be automatically executed in line with changes in the metadata environment, represents a major step forward in comparison to passive data catalog platforms.

The platform has also proven to be highly collaborative with the modern data stack, including dbt, Airflow, Snowflake, BigQuery, and Looker, and has a Slack-native interface that makes data discovery accessible to analysts and business users without the need for them to have to learn yet another platform. This is perhaps one of the strongest aspects of the platform in comparison to others, especially in companies that are looking to data democratization as a priority.

The platform also has robust data governance capabilities, including ownership, classification, policy, and data quality integration, all of which are delivered through an interface that is collaborative rather than administrative. This makes it well-suited for data teams looking to develop the modern, self-service data cultures that the market is currently moving in the direction of.

5. Monte Carlo

Monte Carlo is the leading data observability platform in the industry, and this is because data observability is a specific area of focus in the data world that deals with the monitoring of data pipelines and tables for quality issues, unexpected changes, and anomalies that suggest something has gone wrong upstream in the data environment.

The platform has a machine learning-based anomaly detection engine that monitors the freshness, volume, schema, distribution, and lineage of data in the data stack automatically, learning what is normal and alerting teams when there are deviations from the norm. This is perhaps the best way of addressing the fundamental problem of data observability, which is the impossibility of defining quality rules for every table and pipeline in the data environment.

Monte Carlo also integrates with the main cloud-based data warehouses and data transformation tools and has a lineaged view that connects quality incidents to their source. This can significantly reduce the time spent by data teams to investigate the root cause of the issue. In organizations that have difficulty trusting their data as a business operational issue, the depth of monitoring by Monte Carlo is hard to replicate using general-purpose tooling.

6. Apache Atlas

Apache Atlas is the current leader in open-source-based data governance and metadata management within the Hadoop and Apache ecosystem. For organizations that have significant data-related workloads running within their Hadoop-based infrastructure, Apache Atlas provides the necessary metadata management and governance features that enterprises require without adding additional licensing costs.

Apache Atlas has native integration into HBase, Hive, Kafka, and other Apache-based ecosystem tools. It also has a REST API that can be used to integrate into a broader ecosystem of tools. Its features in the area of data governance include classification, auditing, and policies. The depth of out-of-the-box features in comparison to commercial alternatives is more limited and requires more configuration investment to effectively implement. However, for data engineering teams that are heavily embedded in the Apache ecosystem and have the technical ability to configure and maintain an open-source-based approach to data governance, Apache Atlas is the best fit in its category.

7. Informatica

The Intelligent Data Management Cloud by Informatica is one of the most comprehensive suites of data management tools that can be used by enterprises to address their needs in the areas of data integration, data quality, data governance, master data management, and data catalog.

Its CLAIRE AI engine drives its automated discovery of metadata, data quality scoring, and recommendation features. This helps to minimize the effort needed to keep the environment up to date and current. The depth and breadth of Informatica's expertise in data quality and MDM are also important advantages. Its capabilities in those areas are unmatched by catalog and governance products.

For enterprises that have to deal with complex data integration challenges, Informatica's breadth is a significant advantage compared to point solution vendors that address individual challenges in isolation. Its enterprise-grade nature is also matched by its price and implementation complexity. It is best suited to organizations that have a high degree of maturity in terms of budget and expertise.

8. Stemma

Stemma is developed by the creators of Amundsen and has a strong focus on data discovery and catalog. It has a significant advantage in that it helps to make the data assets findable and trustworthy for the analyst and data scientist who will be using them. Its origins in the open-source Amundsen project have also helped Stemma to establish a significant advantage in that its approach to catalog and governance is driven by its creators' understanding of the needs of those in the industry rather than by governance and compliance drivers.

The ability of Stemma to automatically ingest metadata, provide table descriptions and usage context, helps to minimize the cold start problem that plagues many other data catalog and governance products that require significant effort to be useful.

Choosing the Right Platform for Your Organization

The platforms covered in this guide range from one end of the spectrum to the other in terms of capability, cost, and deployment philosophy. The right choice for your organization will depend on where you see the greatest gaps in data management capability, what you already have in place, and how much complexity you can realistically manage in your stack.

If you're looking for a single platform that can handle data catalog, governance, lineage, discovery, and context management all at once, DataHub's feature set and open-source pedigree make it the best choice for your needs. The ability to deliver a single platform for unified metadata management, rather than trying to glue together multiple point-product solutions, greatly reduces total cost of ownership and the likelihood of data silos that result from disparate tool sets for data governance, catalog management, and observability that lack a common metadata foundation.

If you have highly specialized and deep needs within a single area, such as data observability via Monte Carlo or enterprise governance for regulated industries via Collibra, you may find more depth within a single platform than you will in a more generalized tool.

The direction that the market is taking in 2026 is clearly towards unified, AI-enabled metadata platforms that support the entire data life-cycle, not individual tools for individual needs. The organizations that will be best positioned to leverage their data infrastructure investments to meet the rising stakes for data quality, context, and trust within every aspect of the business will be those that invest in tool stacks that align with this market direction.

Conclusion

As organizations work their way through the intricacies of 2026, the day of fragmented and siloed data tools is finally behind us. The tools and platforms mentioned in this guide showcase a clear industry trend towards unified and AI-ready metadata platforms. Whether you are using DataHub for full-stack developer-first enterprise context, Monte Carlo for proactive observability, or Collibria for rigorous regulatory compliance, the common thread is the same – to build a trusted foundation for your data.

For AI agents and advanced analytics to operate, there must be quality and well-contextualized data. The way forward, however, is that the best-performing organizations in the future will be the ones that recognize data management not as an IT administration task, but as a business strategy. By adopting the latest technology in data management, which is automated and contextualized, your data management team will be ready to support the AI-driven workflows and business intelligence tools that will define the future of digital transformation.