Introduction

Regardless of how much research you conduct, not every marketing effort will produce favorable results. That is why A/B testing is such a useful approach for identifying the online advertising and marketing methods that work best for your company.

Everything from website copy to sales emails can be tested this way. A/B testing helps you find the best-performing version of a campaign before you spend your entire budget on marketing materials that don't work. While A/B testing can be time-consuming, the benefits outweigh the effort.

Overall, well-planned A/B tests can significantly improve the success of your marketing efforts. Narrowing down and combining the most effective elements of a campaign can lead to a higher return on investment, a lower chance of failure, and a stronger marketing strategy.

"We've implemented a version of A/B testing in our moving process to optimize our service. For example, we tested different packing techniques to see which method resulted in faster, more efficient moves. By tracking and comparing the results, we found that using specialized equipment and streamlined packing methods reduced moving time by 20%. This improvement allowed us to deliver a quicker, more reliable service," shares Christopher Vardanyan, co-founder of Rocket Moving Services, on behalf of the company.

What is A/B Testing?

A/B testing (also known as split testing) is a process that compares two variations of a website, form, or other piece of marketing material to see which works better.

It involves running the two variants at the same time in a live environment, randomly showing each user one of them, and judging the results by actual behavior (such as conversion or progression rate). The goal is to identify a "winning" variant that can then be shown to all site visitors.

A crucial component is using statistical analysis to determine whether the results are significant, or whether the difference in performance between the "winning" and "losing" variations falls within the margin of error. A/B testing is useful because different audiences behave differently: something that works for one firm might not work for another.

Let's go through how A/B testing works so you can avoid making false assumptions about what your audience likes.

How Does A/B Testing Work?

To conduct an A/B test, you create two versions of the same piece of content that differ in a single variable. You then show these two versions to two similar-sized audiences over a set period (long enough to draw conclusions about your results) and see which one performs better.

A/B testing allows marketers to see how one version of marketing content compares to another.

Here are two types of A/B tests you might run to improve the conversion rate of your website.

1. Design Test

Maybe you'd want to see if altering the color of your CTA button increases its click-through rate.

To A/B test this idea, create a second CTA button in a different color that links to the same landing page as the original (the "control").

If you typically use a red CTA button in your marketing content and the green version gets more clicks after your A/B test, changing the default color of your CTA buttons to green from now on may be worthwhile.

2. User Experience Test

Perhaps you want to test whether placing a certain call-to-action (CTA) button at the top of your site rather than in the sidebar would increase its click-through rate.

To A/B test this notion, construct a second web page that employs the revised CTA positioning.

Version A, the current design with the CTA in the sidebar, is the "control." Version B, with the CTA at the top, is the "challenger." You then test the two versions by showing each to a specified percentage of site visitors.

Ideally, the percentage of visitors who view each version should be the same.

Learn how to quickly A/B test online forms using the LeadGen Conversion Suite A/B testing feature.

Why is A/B Testing Important?

Accurate A/B tests can make a significant difference in your ROI. By running controlled tests and collecting empirical data, you can determine which marketing methods perform best for your organization and product. When done consistently, A/B testing can significantly improve your results by helping you understand what works and what doesn't.

It's risky to run a promotion at full scale without first checking whether one version performs two, three, or even four times better than another. Testing lets you find out before you put large sums of money at risk.


It's simpler to make judgments and create more successful marketing plans if you know what works and what doesn't (and have proof to back it up).

Here are a few more advantages of doing A/B tests on your website and marketing materials regularly:

1. Understand your target audience: By seeing which emails, headlines, and other elements your audience responds to, you gain insight into who your audience is and what they want.

2. Reduce bounce rates: When visitors see content they enjoy, they stay on your site longer. Testing which kinds of content and marketing materials your visitors prefer can help you build a better site, one that people will want to return to.

3. Keep up with shifting trends: It's difficult to predict which kinds of content, graphics, or other elements people will respond to. Testing frequently helps you stay ahead of changing customer behavior.

4. Increase conversion rates: A/B testing is one of the most reliable ways to boost conversion rates. Knowing what works and what doesn't gives you actionable data you can use to improve the conversion process.

Eventually, you'll regain control of your marketing. No more closing your eyes, pressing "send," and hoping your customers respond. If you want to explore practical variations and real-world test ideas, here's a collection of detailed A/B testing examples that shows how brands run experiments across CTAs, headlines, layouts, and entire page experiences.

Why A/B Test Your Forms?

The short answer is that it allows you to instantly begin boosting the conversion rate of your forms.

In more detail, A/B testing removes guesswork and opinion from form and UX design. It lets you understand which elements drive users to convert or abandon, and apply that knowledge to your form flow to refine it continuously.

1. Set up your data and testing platforms

To draw reliable conclusions from your testing, you need the right tools. First, to A/B test a form effectively (as opposed to testing a whole website or piece of software), you need a form analytics platform that shows what's happening at the field level. Of course, we suggest using the LeadGen App for the best results.

Some businesses try to cut corners at this stage by measuring only the overall form conversion rate in a general analytics package, based on conversion and visitor data, but this is a mistake. How can you make reliable decisions about what to change if you don't understand what users are doing within the form?

The second thing you'll need is an A/B testing or experimentation platform. These tools sit behind your website and let you set up and run tests, randomly routing your web traffic to the versions being tested. Several software packages are available; whichever you choose, make sure it is compatible with your form analytics provider.
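Testing platforms handle traffic routing for you, but the underlying idea fits in a few lines. The Python below is a minimal illustration, not how any particular tool (LeadGen App included) works: it assumes each visitor has a stable ID (for example, from a first-party cookie) and hashes that ID together with a hypothetical experiment name, so a returning visitor always lands in the same group.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, split: float = 0.5) -> str:
    """Return "A" (control) or "B" (challenger) for this visitor.

    The same user_id and experiment name always produce the same answer, so a
    returning visitor keeps seeing the same version. `split` is the share of
    traffic sent to the control.
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    # Interpret the first 8 bytes of a SHA-256 digest as an integer in [0, 2**64).
    bucket = int.from_bytes(hashlib.sha256(key).digest()[:8], "big")
    # Integer comparison avoids float rounding near the top of the range.
    return "A" if bucket < int(split * 2**64) else "B"
```

Because the experiment name is part of the hashed key, the same visitor can fall into different groups in different experiments, which keeps separate tests independent of each other.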

While LeadGen App can import data from many major tools, we have direct interfaces with Google Optimize, Convert, and Optimizely, so these are among our favorites.

2. Form your hypothesis

It's a good idea to develop a hypothesis that you'll test against the status quo to see whether it makes a difference. It might read something like, "If we make change X, our conversion rate will increase."

Having said that, you shouldn't just make these hypotheses up. That would be wasteful, and you wouldn't be testing the changes with the greatest potential impact. Instead, examine your form analytics data to identify problem areas, and build hypotheses around the places with the most potential for improvement.

While each form is unique, you might wish to test in the following areas:

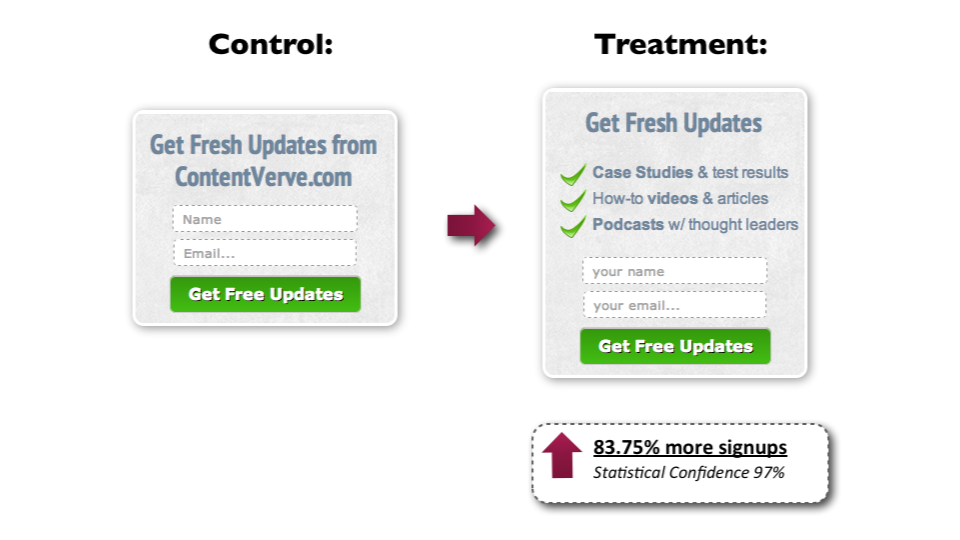

a. Call-To-Action: Does changing the submit button (color, label, etc.) make people more inclined to proceed?

b. Instructions: Will changing a field label or adding help text improve completion? According to this study, it may.

c. Individual fields: Does deleting a certain field increase (or decrease) conversion rates?

d. Error Messages: Changing the copy, position, or trigger of an error message might reduce abandonment in that field. If your error messages are generic or unhelpful, try something more specific.

e. Form Structure: Will splitting the form into multiple steps be more effective than a single page?

f. Validation: Adding inline validation or changing when it triggers may improve the user experience. This classic study found a 22% increase in successful submissions with inline validation.

g. Progress Indicators: Subtle nudges showing users their progress can be effective, but as this study shows, they can be a double-edged sword if not used well.

h. Save Button: Would allowing the user to save their inputs in a long form make them less likely to abandon the form?

i. Payment Methods: Is the way you ask for payment discouraging users? To see whether you can improve, try other payment integrations and wording.

j. Social Proof: Will adding a sense of urgency or proof of satisfied customers increase the user's likelihood of committing?

k. Form Placement: Will moving the form encourage more people to fill it out?

l. Privacy Copy: Are people put off by the fine print? CXL cites research that found a 19% decrease in conversions with one particular wording of privacy copy.

3. Implement the Experiment

Now that you have your hypotheses, it's time to choose one and begin the A/B testing procedure. While doing so, keep the following crucial points in mind:

a. Test one variable at a time

Change only one variable at a time. Otherwise, you cannot pinpoint what caused the difference.

b. Be explicit about the metric you're measuring

What's your key performance indicator (KPI)? Is it the whole form conversion rate or the abandonment rate for a certain field? Once you've decided on this, make sure you stick to it and use it to determine the test winner.
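To make the KPI concrete, here is a minimal Python sketch of two candidate metrics: the whole-form conversion rate and a per-field drop-off rate. The `FormSession` record is a hypothetical stand-in for whatever your form analytics platform exports, and the drop-off definition used here (sessions that abandoned on a field divided by sessions that touched it) is one reasonable choice, not a standard.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class FormSession:
    """Hypothetical record of one visitor's interaction with a form."""
    fields_touched: list[str]  # field names, in the order the visitor used them
    submitted: bool


def form_conversion_rate(sessions):
    """Share of sessions that started the form (touched any field) and submitted it."""
    started = [s for s in sessions if s.fields_touched]
    if not started:
        return 0.0
    return sum(s.submitted for s in started) / len(started)


def field_dropoff_rates(sessions):
    """For each field: abandoned sessions whose last field was this one,
    divided by all sessions that touched this field."""
    touched = Counter()
    abandoned_at = Counter()
    for s in sessions:
        for name in set(s.fields_touched):
            touched[name] += 1
        if s.fields_touched and not s.submitted:
            abandoned_at[s.fields_touched[-1]] += 1
    return {name: abandoned_at[name] / touched[name] for name in touched}


# Illustrative data only.
sessions = [
    FormSession(["email", "phone"], submitted=False),
    FormSession(["email", "phone", "company"], submitted=True),
    FormSession(["email"], submitted=False),
    FormSession(["email", "phone"], submitted=True),
]
print(form_conversion_rate(sessions))  # 0.5
print(field_dropoff_rates(sessions))
```

Whichever of these you pick as the KPI, compute it the same way for both variants and decide the test on that number alone.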

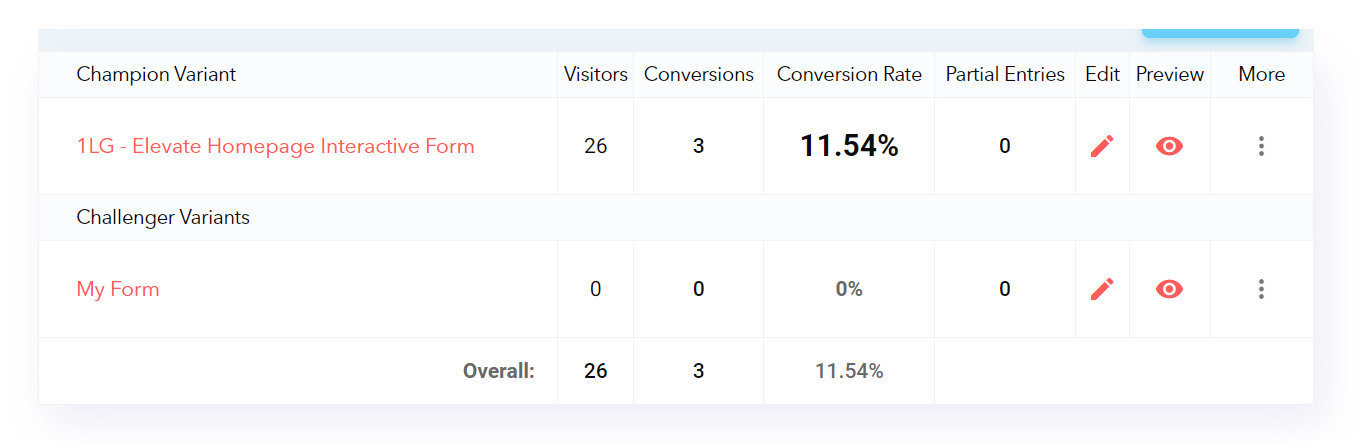

c. Create one "challenger" variant and compare it to the current "control"

For a clean comparison, it's usually best to develop only one new variant based on your hypothesis. While you may be tempted to build lots of new versions, what you actually need to know is how a modification performs compared to what you have now.

d. Set the sample size and randomization criteria

To draw meaningful conclusions, you need a large enough sample of user visits. You don't need a Ph.D. in statistics to work out the right number: your A/B testing tool should be able to determine it for you.

e. Test, Test, Test

Set your test to run.

Don't act on the results until you've collected the required sample size. The most embarrassing thing for a conversion rate optimizer is to declare the outcome of a test prematurely, only to discover that the outcome changes once the full sample has been collected.
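To see why peeking is risky, the Python sketch below simulates A/A tests, where both versions are identical, so any "winner" is a false positive. It compares checking for significance after every batch of visitors with checking once at the end. The 10% conversion rate, batch size, and number of looks are arbitrary assumptions chosen for illustration; the significance check is a standard pooled two-proportion z-test.

```python
import math
import random
from statistics import NormalDist


def p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value from a pooled two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # no conversions (or all conversions) in both arms
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def false_positive_rates(sims=1000, looks=10, batch=100, rate=0.10,
                         alpha=0.05, seed=42):
    """Simulate A/A tests (both arms identical) and return
    (rate when peeking after every batch, rate with one check at the end)."""
    rng = random.Random(seed)
    peeking_hits = fixed_hits = 0
    for _ in range(sims):
        conv_a = conv_b = n = 0
        significant_at_some_look = False
        for _ in range(looks):
            conv_a += sum(rng.random() < rate for _ in range(batch))
            conv_b += sum(rng.random() < rate for _ in range(batch))
            n += batch
            if p_value(conv_a, n, conv_b, n) < alpha:
                significant_at_some_look = True  # a peeker would stop here
        peeking_hits += significant_at_some_look
        fixed_hits += p_value(conv_a, n, conv_b, n) < alpha
    return peeking_hits / sims, fixed_hits / sims


peeking, fixed = false_positive_rates()
print(f"peeking: {peeking:.1%}, single look: {fixed:.1%}")
```

The single final check stays close to the intended 5% error rate, while stopping at the first significant look declares a "winner" far more often, even though nothing changed.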

4. Gather the results and evaluate their significance

Once you've reached the required sample size, you can see how the test went! Your first instinct will be to look at the test metric and see which version "won." But don't get carried away. You still need to assess whether the difference between the two variations is statistically significant. A small difference might not be conclusive.

Again, your A/B testing software should make this clear, or you can validate it for yourself.
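If you want to validate the result yourself, a pooled two-proportion z-test is one standard way to compare two conversion rates. This Python sketch uses only the standard library; the visitor and conversion counts are made-up illustrative numbers, and your testing tool may use a different method (for example, a Bayesian or sequential approach).

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Pooled two-proportion z-test.

    conv_* are conversions and n_* are visitors for control (a) and
    challenger (b). Returns (z, two-sided p-value).
    """
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / se
    p = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p


# Illustrative numbers: 4.0% vs 5.0% conversion on 5,000 visitors each.
z, p = two_proportion_z_test(200, 5000, 250, 5000)
print(f"z = {z:.2f}, p = {p:.3f}")  # z = 2.41, p = 0.016
```

A p-value below 0.05 corresponds to the common 95% confidence threshold; a p-value above it means the difference could plausibly be noise.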

5. Make the required changes

The results are in. What should you do now?

If the results revealed that the challenger variation outperformed the control variant, make the change permanent for all form users.

If the control outperforms the challenger variation, make no changes.

If the difference is not significant, you have three options:

a. Make no changes (since there is no sign of an improvement).

b. Make the change permanent (since there is no sign of a negative impact on performance).

c. Refine the variant and re-run the test. For example, if you believe that changing the error message may reduce abandonment but the test results are inconclusive, you might try a different version of the error copy.
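The decision rules above can be written as a tiny function. This Python sketch assumes you already have a p-value from your testing tool (or from a significance test) and uses the conventional 0.05 threshold; the function name and return labels are illustrative, not part of any tool.

```python
def decide(control_rate: float, challenger_rate: float,
           p_value: float, alpha: float = 0.05) -> str:
    """Map a finished test onto the outcomes described above."""
    if p_value >= alpha:
        # Not significant: keep the control, ship anyway, or refine and re-run.
        return "inconclusive"
    if challenger_rate > control_rate:
        return "ship challenger"
    return "keep control"


print(decide(0.040, 0.050, p_value=0.016))  # ship challenger
```

Note that "inconclusive" deliberately does not pick one of the three options for you; that choice depends on your hypothesis and how costly the change is.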

6. Repeat as necessary

You've just finished your A/B test, made the modifications, and informed the rest of the team. Isn't it time to kick back and revel in the reflected brilliance of your relative genius? No?

Obviously not. It's time to move on to more testing so you can keep improving. Companies with ongoing experimentation programs typically reap the benefits; for example, Bing increases revenue per search by 10-25% each year through experimentation alone. Don't rest on your laurels; instead, plan your next test and launch it.

A/B Testing Goals

A/B testing may reveal a lot about your target audience's behavior and interactions with your marketing campaign.

A/B testing not only helps you discover your audience's behavior, but the outcomes of the tests may also assist you in establishing your future marketing goals.

Here are some of the most typical objectives marketers have for their businesses while A/B testing.

1. Higher Conversion Rate

A/B testing not only helps bring visitors to your website, but it may also assist in enhancing conversion rates.

Changing the placement, color, or even anchor text of your CTAs can affect the number of visitors who click through to a landing page.

This can increase the number of people who fill out forms on your website, give you their contact information, and "convert" into leads.

2. Increased Website Traffic

A/B testing can help you find the ideal wording for your page titles so that you capture your audience's attention.

Trying out alternative blog post or web page titles can affect how many people visit your website by clicking the hyperlinked title. This has the potential to increase website traffic.

A rise in website traffic is a good sign! More traffic means more opportunities for sales.

3. The Right Product Images

You know you have the ideal product or service to offer your target market. But how can you know whether you've chosen the correct product image to express what you're offering?

A/B testing may identify which product image better captures the attention of your target audience. Compare the photographs and choose the image with the highest sales rate.

4. Low Bounce Rate

A/B testing can help you determine what is driving traffic away from your website. Perhaps the feel of your website does not resonate with your target demographic, or perhaps the colors clash and leave a bad impression on your target audience.

If your website visitors depart (or "bounce") soon after viewing your site, experimenting with alternative blog post openers, typefaces, or prominent photos will help you keep them.

5. Less Cart Abandonment

Around 70% of online shoppers leave a site with items still in their shopping cart. This is known as "shopping cart abandonment," and it is obviously harmful to any online business.

You may be able to reduce this abandonment rate by experimenting with alternative product photos, checkout page styles, and even where shipping costs are shown.

Let's look at a checklist for setting up, performing, and assessing an A/B test.

How to Design an A/B Test

Creating an A/B test might appear to be a difficult undertaking at first. But believe us when we say it's easy.

The key to creating a successful A/B test is determining which aspects of your blog, website, or ad campaign can be compared and contrasted with a new or different version.

Before you test every aspect of your marketing plan, review these A/B testing best practices.

Test the right elements

List the aspects that may affect how your target audience interacts with your advertisements or website. Consider which components of your website or marketing campaign have the most effect on a sale or conversion. Make sure the items you select are relevant and can be adjusted for testing.

In a Google ad campaign, for example, you may test which typefaces or pictures best capture your audience's attention. Alternatively, you may test two pages to see which one keeps people on your website longer.

Choose relevant test items by listing and prioritizing aspects that impact your total sales or lead conversion.

Calculate the sample size

The sample size of an A/B test can have a significant influence on the findings, and not always in a good way: a sample that is too small will distort the results.

Make certain that your sample size is large enough to get reliable findings. Use resources such as a sample size calculator to help you determine the optimal number of interactions or visitors to your website or campaign.
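Under the hood, a sample size calculator for conversion rates typically uses a formula like the one in this Python sketch: the standard normal-approximation formula for comparing two proportions. The inputs (a 4% baseline, a 25% relative lift, 5% significance, 80% power) are illustrative assumptions, and individual calculators may use slightly different variants of the formula.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline: float, relative_lift: float,
                            alpha: float = 0.05, power: float = 0.80) -> int:
    """Visitors needed in EACH variant to detect the given relative lift
    with a two-sided test at significance level `alpha`."""
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


print(sample_size_per_variant(0.04, 0.25))  # detect 4% -> 5%
print(sample_size_per_variant(0.04, 0.10))  # detect 4% -> 4.4%: far more visitors
```

The required sample grows roughly with the inverse square of the difference you want to detect, which is why small expected lifts need much longer tests.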

Check your Data

A well-designed split test produces statistically significant, dependable findings. In other words, the difference you observe is unlikely to be the result of random chance. But how can you be certain that your findings are statistically significant and reliable?

Tools for data verification are available, just as they are for establishing sample size.

Using tools like A/B test significance calculators, you can ensure that your data is statistically significant and dependable.
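Significance calculators usually report a p-value; a confidence interval for the difference in conversion rates also shows how large the improvement plausibly is. The Python sketch below uses the simple Wald (normal-approximation) interval, which is reasonable for large samples but is only one of several methods; the function name and the example counts are illustrative.

```python
import math
from statistics import NormalDist


def lift_confidence_interval(conv_a, n_a, conv_b, n_b, confidence=0.95):
    """Wald interval for (challenger rate - control rate)."""
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    se = math.sqrt(rate_a * (1 - rate_a) / n_a + rate_b * (1 - rate_b) / n_b)
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    diff = rate_b - rate_a
    return diff - z * se, diff + z * se


# Illustrative: 200/5,000 (4.0%) control vs 250/5,000 (5.0%) challenger.
low, high = lift_confidence_interval(200, 5000, 250, 5000)
print(f"difference: {low:+.2%} to {high:+.2%}")  # difference: +0.19% to +1.81%
```

If the whole interval sits above zero, the challenger is very likely better; if the interval straddles zero, the result is inconclusive.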

Schedule your Tests

When comparing variables, it's critical to keep all other conditions constant, including when you run your tests.

Choose a comparable duration for both tested parts to ensure the accuracy of your split tests. To achieve the best, most accurate results, run your campaigns at the same time.

Choose a timeframe during which you may expect comparable traffic to both halves of your split test.
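Related to this, a quick way to confirm that both halves really received comparable traffic is a sample ratio mismatch (SRM) check: compare the observed visitor counts with the split you configured. The Python sketch below uses a chi-square goodness-of-fit test with one degree of freedom, whose p-value can be computed exactly with `math.erfc`; the function name and example counts are illustrative assumptions. A very small p-value suggests something (a redirect, a bot filter, a tracking bug) is skewing the split.

```python
import math


def sample_ratio_p_value(visitors_a: int, visitors_b: int,
                         expected_share_a: float = 0.5) -> float:
    """Chi-square (1 degree of freedom) test of the observed traffic split."""
    total = visitors_a + visitors_b
    exp_a = total * expected_share_a
    exp_b = total - exp_a
    chi2 = (visitors_a - exp_a) ** 2 / exp_a + (visitors_b - exp_b) ** 2 / exp_b
    # For 1 degree of freedom, P(X > chi2) = erfc(sqrt(chi2 / 2)).
    return math.erfc(math.sqrt(chi2 / 2))


print(round(sample_ratio_p_value(5_050, 4_950), 3))  # 0.317: plausible 50/50 split
print(sample_ratio_p_value(5_000, 5_300))            # very small: investigate first
```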

Test one element at a time

Don't test many components at the same time. A good A/B test will focus on only one aspect at a time.

Each aspect of your website or ad campaign might have a major influence on the behavior of your target audience. That is why, while doing A/B testing, it is critical to focus on only one aspect at a time.

Testing many items in the same A/B test produces untrustworthy results: because the changes are mixed together, you won't know which factor had the most influence on customer behavior.

Make sure your split test is only for one aspect of your ad campaign or website.

Analyze the Data

As a marketer, you may think you understand how your target audience interacts with your campaign and web pages. A/B testing can give you a more accurate picture of how customers actually interact with your websites.

After the testing is over, spend some time analyzing the results thoroughly. You might be surprised to learn that what you thought was working for your campaigns is less successful than you expected.

Accurate, dependable data may tell a different story than you expected. Use it to plan or adjust your marketing.

How to A/B Test your Forms with the LeadGen App

The simplest way to set up conversion testing for lead-generation forms:

1. Create First Variant

Create a LeadGen form, pick a template and design, and then embed it on your page.

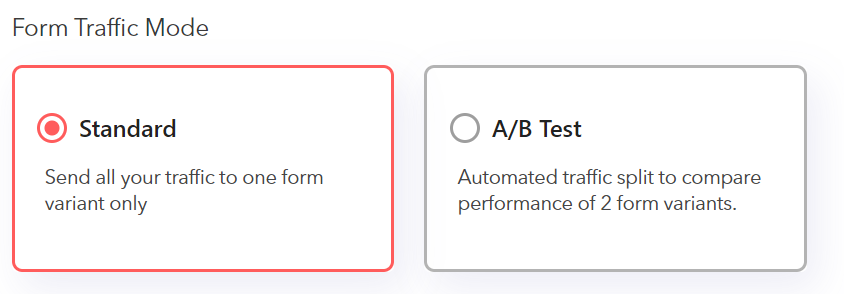

2. Create your Second Variant

Click A/B test form mode and either clone your form or create a new form from scratch that is different from your first form variant.

3. Start the Experiment

You can now A/B test your form in just a few clicks.

After the A/B Test

Finally, let's go through what to do following your A/B test:

1. Concentrate on your desired metric

Again, even though you'll be measuring several metrics, focus your analysis on the key objective metric.

For example, if you tested two form versions and picked leads as your key statistic, don't become too focused on click-through rates.

You may find that one variation has a higher click-through rate but fewer conversions. In that case, pick the variation that wins on conversions, even though its click-through rate is lower.
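The rule is easy to encode: fix the primary metric before the test and rank variants only by it. In this hypothetical Python sketch, variant B wins on click-through rate but A wins on leads, so A is the winner when leads are the key metric. The class, function, and numbers are invented for illustration, and in practice you would still check that the difference is statistically significant.

```python
from dataclasses import dataclass


@dataclass
class VariantResult:
    name: str
    visitors: int
    clicks: int
    leads: int

    @property
    def click_through_rate(self) -> float:
        return self.clicks / self.visitors

    @property
    def lead_rate(self) -> float:
        return self.leads / self.visitors


def pick_winner(results, primary_metric="lead_rate"):
    """Return the variant with the best value of the chosen primary metric."""
    return max(results, key=lambda r: getattr(r, primary_metric))


# Illustrative numbers only.
results = [
    VariantResult("A", visitors=10_000, clicks=900, leads=120),
    VariantResult("B", visitors=10_000, clicks=1_200, leads=95),
]
print(pick_winner(results).name)                                       # A
print(pick_winner(results, primary_metric="click_through_rate").name)  # B
```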

2. Use our A/B testing calculator to determine the significance of your results

To find out, you'll need to run a statistical significance test. Define your test parameters in the pop-up: give your experiment a unique title and choose the challenger variants you want to compete against your current champion variant.

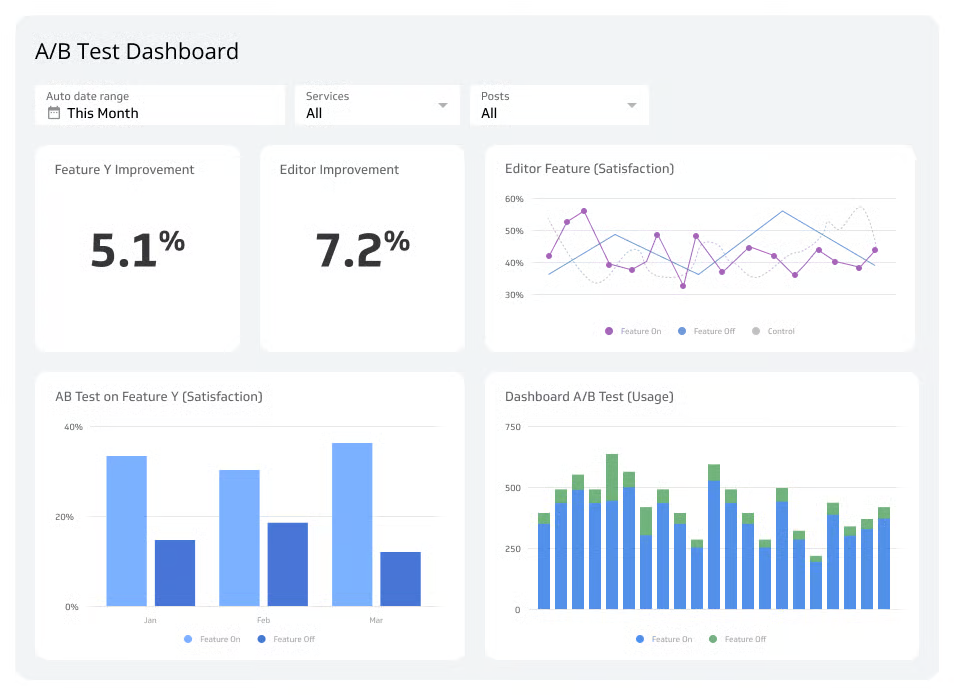

Finally, once you've defined the options, click "Start experiment" to launch your A/B test. LeadGen will then dynamically split traffic on your embedded form between the two forms.

Your experiment is now active, and you can access the tracking dashboard at any moment by visiting the experiments area of the form overview page.

3. Take action based on your results

If one variant outperforms the other with statistical significance, you have a winner. Complete your test by turning off the losing variant in your A/B testing tool.

Data from the losing variant can help you design a new iteration for your next A/B test.

If neither variation is significantly better, the variable you tested did not measurably affect the results, and you should label the test inconclusive. In this situation, either keep the original variant or run another test.

4. Plan your next A/B Test

The A/B test you just completed may have helped you uncover a new way to improve the effectiveness of your marketing material, but don't stop there. There is always room for improvement.

You may even do an A/B test on another aspect of the same form that you just tested.

For example, if you recently tested a headline on an online form, why not test body text as well? Or perhaps a color scheme? Or perhaps images? Always be on the lookout for strategies to improve conversion rates and lead generation.

FAQ

1. How do you plan for an A/B test?

Planning an A/B test involves a systematic approach to designing and conducting the test to ensure accurate and actionable results. Here's a step-by-step guide on how to plan an A/B test:

a. Define Your Objective: Clearly outline the goal of the A/B test. Identify the specific metric or key performance indicator (KPI) you want to improve or measure through the test.

b. Choose a Variable to Test: Decide on the element you want to test, known as the "variable." This could be anything from the layout, color, text, or call-to-action on a webpage or email.

c. Create Hypotheses: Formulate hypotheses about how changing the variable may impact the desired outcome. These hypotheses will guide the design of your test variations.

d. Determine Sample Size: Calculate the required sample size to achieve statistical significance. A larger sample size will yield more reliable results.

e. Randomize Participants: Randomly divide your audience into two or more groups (A, B, etc.). Ensure that participants are randomly assigned to each variation to avoid bias.

f. Develop Variations: Create different versions (A and B) of your test element based on the hypotheses. Keep all other elements consistent between variations to isolate the effect of the tested variable.

g. Set Test Duration: Determine how long the A/B test will run. It should be long enough to collect sufficient data, but not so long that external factors start to influence the results.

h. Implement Tracking: Set up tracking tools or software to monitor the performance of each variation. This could involve using A/B testing software, analytics platforms, or custom tracking codes.

i. Run the Test: Launch the A/B test and ensure that all participants are exposed to their assigned variation.

j. Monitor Results: Monitor each variation throughout the test to catch tracking or delivery problems, and collect data on the chosen metric. Avoid drawing conclusions before you reach your planned sample size.

k. Analyze Results: Once the test is complete, analyze the data to determine which variation performed better in achieving the desired objective.

l. Draw Conclusions: Based on the results, draw conclusions about the effectiveness of the tested variable in improving the desired outcome.

m. Implement Winning Variation: If one variation significantly outperforms the others, implement the winning variation permanently to optimize the desired metric.

n. Iterate and Repeat: Use the insights gained from the A/B test to inform future experiments and continuously improve your website, emails, or marketing strategies.

Remember, A/B testing is an iterative process, and the insights gained from each test can help inform and improve future tests to drive better results and optimize your digital assets effectively.

2. What is A/B testing?

A/B testing, also known as split testing, is a method of comparing two or more variations of a web page, email, advertisement, or other digital content to determine which version performs better in achieving a specific goal or objective. It is a powerful optimization technique used by businesses and marketers to make data-driven decisions and improve the effectiveness of their digital assets.

In A/B testing, two or more variations, typically labeled as A, B, C, etc., are created, with only one element (e.g., design, copy, call-to-action) being different between them. The variations are then presented to randomly divided audiences, and their performance is measured using specific metrics or key performance indicators (KPIs).

The primary goal of A/B testing is to identify which variation leads to higher user engagement, conversion rates, or other desired actions. By comparing the results, businesses can make informed decisions on which version is more effective and implement the winning variation to maximize the desired outcome.

3. Why does A/B testing matter?

a. Data-Driven Decision Making: A/B testing allows businesses to move away from assumptions and gut feelings, instead relying on concrete data and insights to make informed decisions about their digital content.

b. Optimization: By continuously testing and optimizing different elements of digital assets, businesses can identify the most effective versions that resonate best with their target audience.

c. Improving User Experience: A/B testing helps businesses understand what design and content elements result in better user experiences, leading to increased engagement and customer satisfaction.

d. Conversion Rate Optimization: For e-commerce websites or lead generation pages, A/B testing can significantly improve conversion rates, leading to increased revenue and growth.

e. Cost-Effectiveness: A/B testing allows businesses to make incremental improvements over time, avoiding expensive overhauls that might not yield the desired results.

f. Tailoring Content: A/B testing helps businesses tailor their content to the preferences of their audience, resulting in content that is more relevant and compelling.

g. Learning from Failures: A/B testing helps businesses learn from failures and what doesn't work, leading to better strategies and avoiding repeating unsuccessful experiments.

h. Insights into Audience Behavior: A/B testing provides valuable insights into how users interact with digital content, leading to a better understanding of audience behavior and preferences.

In conclusion, A/B testing is a crucial practice for businesses and marketers to optimize their digital assets, increase engagement and conversions, and ultimately drive business growth. It enables data-driven decision-making and continuous improvement, ensuring that businesses deliver the best possible experience to their audience.

4. What are the benefits of conducting A/B tests for my website or marketing campaigns?

Conducting A/B tests for your website or marketing campaigns offers a range of benefits that can significantly improve your overall performance and drive business growth. Some of the key benefits include:

a. Data-Driven Decision Making: A/B testing allows you to make decisions based on concrete data rather than assumptions or gut feelings. It provides empirical evidence of what works and what doesn't, guiding you to optimize your strategies.

b. Increased Conversion Rates: A/B testing helps identify the most effective variations that lead to higher conversion rates. By implementing winning variations, you can attract more leads, customers, and revenue.

c. Improved User Experience: Testing different elements on your website or in marketing materials helps you understand what resonates best with your audience, leading to an enhanced user experience and increased engagement.

d. Higher ROI: A/B testing allows you to invest resources more efficiently by focusing on elements that drive the best results. This optimization often translates into higher returns on your marketing investments.

e. Better Content Performance: By testing different content variations, you can determine which messages, images, or videos are most compelling to your audience, resulting in more impactful and engaging content.

f. Reduced Bounce Rates: Optimizing your website through A/B testing can lower bounce rates by providing visitors with more relevant and engaging content, encouraging them to stay on your site longer.

g. Enhanced Customer Insights: A/B testing can provide valuable insights into customer behavior, preferences, and pain points. Understanding your customers better allows you to tailor your offerings and marketing efforts to meet their needs.

h. Competitive Advantage: Constantly testing and improving your website or marketing campaigns gives you a competitive edge. Staying ahead of competitors ensures you are continuously providing the best user experience.

i. Cost-Effectiveness: A/B testing allows you to make incremental improvements over time, avoiding costly overhauls that might not yield the desired results.

j. Continuous Optimization: A/B testing fosters a culture of continuous improvement and experimentation. Regularly optimizing your strategies helps you stay relevant and adapt to changing market dynamics.

k. Informed Marketing Strategies: Insights gained from A/B testing can inform your overall marketing strategies, ensuring that you allocate resources where they have the most impact.

l. Empirical Validation: A/B testing provides empirical validation of your marketing hypotheses, giving you confidence in your marketing decisions.

A/B testing empowers businesses to make data-driven decisions, improve conversion rates, enhance user experience, and optimize marketing efforts. By continuously testing and iterating, you can achieve meaningful improvements and remain competitive in your industry.

5. How long should I run an A/B test to get reliable results?

The duration of an A/B test depends on several factors, including the size of your audience, the level of traffic or engagement, and the magnitude of the expected change in user behavior. To get reliable and statistically significant results, it's essential to run the test for an adequate period. Here are some considerations to determine the duration:

a. Sample Size: The more visitors or participants you can include, the sooner you can reach statistical significance. A larger sample size reduces the margin of error and allows for more reliable results.

b. Expected Effect Size: If you expect a significant change in user behavior because of the tested variation, you might need a shorter test duration to detect the impact. Conversely, if you expect a subtle effect, a longer test duration may be necessary.

c. Baseline Conversion Rate: For a given relative improvement, a higher baseline conversion rate generally requires fewer visitors, and therefore less time, to detect a statistically significant difference. Lower baseline rates require more time.

d. Minimum Run Time: Industry experts commonly recommend running an A/B test for at least two to four weeks. This way every day of the week is represented more than once, and you capture some of the weekly or monthly variation in user behavior.

e. Variability in User Behavior: If user behavior varies significantly throughout the week or month, it's crucial to run the test for a duration that includes different patterns.

f. Time-Dependent Metrics: Some metrics may have seasonality or time-dependent trends. In such cases, you might need to run the test for a longer period to observe changes across different timeframes.

g. Traffic Volume: A/B tests with high traffic volumes can reach statistical significance faster than tests with lower traffic volumes.

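h. Statistically Significant Results: Aim for a statistically significant result at a confidence level of at least 95%, which corresponds to a p-value threshold of 0.05. This means that if the variations truly performed the same, a difference as large as the one you observed would arise from random chance less than 5% of the time. It does not mean there is a 95% probability that the winning variation is truly better.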

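i. Monitoring Results: Monitor the test throughout its run to catch tracking errors, broken variants, or sudden drops in key metrics. However, avoid ending the test early just because the results look significant. Checking repeatedly and stopping at the first significant reading ("peeking") inflates the false-positive rate. Decide on the sample size and duration in advance, or use a tool that supports sequential testing if you need the option to stop early.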
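To make point h concrete, here is a minimal Python sketch of the two-proportion z-test, a standard test for comparing conversion rates. It assumes you have raw visitor and conversion counts for each variant, and that samples are large enough for the normal approximation to hold. The function name `two_proportion_z_test` is our own and is not part of any A/B testing tool.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Compare two conversion rates; return (z statistic, two-sided p-value)."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Under the null hypothesis both variants share one conversion rate,
    # so the standard error uses the pooled rate.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical counts: 5,000 visitors per variant.
z, p = two_proportion_z_test(conv_a=200, n_a=5000, conv_b=260, n_b=5000)
print(f"z = {z:.2f}, p = {p:.4f}, significant at 95%: {p < 0.05}")
```

In this made-up example, variant B's 5.2% conversion rate beats A's 4.0% with a p-value well below 0.05. The check is only valid if it is run at the sample size you planned in advance, not at the first moment the numbers look good.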

It's essential to balance collecting enough data for reliable results against reaching a decision in a timely manner. By weighing these factors and using statistical significance calculators or A/B testing tools, you can determine the appropriate duration for your specific experiment.

6. What tools or software can I use for A/B testing?

Many tools are available for conducting A/B tests, from simple, user-friendly solutions to advanced platforms with robust features. Here are some popular options used by businesses and marketers:

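i. Google Optimize: A free A/B testing tool from Google that integrated with Google Analytics, which made it convenient for teams already using the Google ecosystem. Note that Google discontinued Optimize in September 2023, so teams that relied on it now need one of the other tools on this list.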

ii. LeadGen App: Increase lead conversions with online form testing in the LeadGen App. Forms are the primary interface that converts visitors into leads, so A/B testing your lead forms lets you keep improving conversion rates without modifying the rest of your website. Conversion testing in the LeadGen App is fully automated. Simply duplicate your champion (best-performing) variant, edit the copy to create a new variant, and start the form experiment.

iii. Optimizely: A powerful A/B testing and experimentation platform that caters to both small businesses and enterprise-level organizations. It offers a wide range of features, including advanced targeting and personalization options.

iv. Crazy Egg: This tool focuses on visual analytics, offering heatmaps and user recordings to understand user behavior and optimize websites effectively.

v. AB Tasty: A user-friendly platform that provides A/B testing, personalization, and customer experience optimization features. Suitable for both small businesses and larger enterprises.

vi. Convert: An A/B testing and personalization tool with a user-friendly interface. It offers advanced features, including multi-page experiments and custom targeting.

vii. Unbounce: Primarily known as a landing page builder, Unbounce also includes A/B testing capabilities, allowing users to optimize their landing pages for better conversion rates.

When selecting an A/B testing tool, consider factors such as ease of use, integration with other marketing tools, the number of visitors or tests allowed in your plan, reporting capabilities, and customer support. Some tools offer free trials or limited versions, allowing you to test their features before committing to a paid plan.

Choose the tool that best suits your needs and budget while providing the functionality required for your A/B testing goals.

7. Can I run A/B tests on different devices or platforms, such as mobile or desktop?

Yes, you can and should run A/B tests on different devices or platforms, such as mobile or desktop. A/B testing is not limited to a specific device or platform; it is a versatile method that can be applied across various digital touchpoints to optimize user experiences and conversions.

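Running A/B tests on different devices is crucial because user behavior and preferences can vary significantly between mobile and desktop users. For example, a long, multi-field form that converts well on desktop may frustrate visitors typing on a small touchscreen. As a result, a variation that wins on one device can lose on another.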

By running A/B tests on different devices and platforms, you can learn how each audience behaves and tailor your digital experiences to its needs. This approach can lead to higher conversions, better user engagement, and improved overall performance across all devices.
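One practical way to act on this is to record each visitor's device type and break the results down by device as well as by variant. The Python sketch below assumes a hypothetical export of (device, variant, converted) records from your testing tool. The function name and record format are illustrative and not any specific tool's API.

```python
from collections import defaultdict
from collections.abc import Iterable


def conversion_by_segment(
    events: Iterable[tuple[str, str, bool]],
) -> dict[tuple[str, str], tuple[int, int, float]]:
    """Group (device, variant, converted) records; return (conversions, visitors, rate) per group."""
    visitors = defaultdict(int)
    conversions = defaultdict(int)
    for device, variant, converted in events:
        key = (device, variant)
        visitors[key] += 1
        conversions[key] += int(converted)  # True counts as 1, False as 0
    return {
        key: (conversions[key], visitors[key], conversions[key] / visitors[key])
        for key in visitors
    }


# Hypothetical records exported from a testing tool.
events = [
    ("mobile", "A", False), ("mobile", "A", True), ("mobile", "B", True),
    ("desktop", "A", True), ("desktop", "B", False), ("desktop", "B", True),
]
for (device, variant), (conv, n, rate) in sorted(conversion_by_segment(events).items()):
    print(f"{device:<8} {variant}: {conv}/{n} converted ({rate:.0%})")
```

Each device segment has fewer visitors than the test as a whole. Check significance within each segment (for example, with a two-proportion z-test) before acting. Also be wary of declaring winners in many small segments at once, since every extra comparison raises the chance of a false positive.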

8. How do I deal with inconclusive results or when there is no significant difference between variations?

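Inconclusive results, where there is no statistically significant difference between variations, can be frustrating. They are common in A/B testing, so approach them with a systematic, analytical mindset. Start by checking whether the test reached its planned sample size. If it did not, the test may simply have lacked the power to detect a real difference, so keep it running or retest with more traffic. If it did, the change probably had little effect. In that case, record what you learned and try a bolder variation.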

Remember that inconclusive results are a natural part of the A/B testing process. The key is to use these results as learning opportunities, refine your testing strategy, and continue experimenting to optimize your digital experiences effectively.
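One systematic way to read a flat result is to look at the confidence interval for the difference between variants, not just the p-value. The Python sketch below computes a 95% interval using the normal approximation and applies a simple rule of thumb. If the interval includes zero but also includes differences large enough to matter, the test is genuinely inconclusive. If it stays within a range you consider negligible, the variants are effectively equivalent. The `min_meaningful` threshold and the function names are hypothetical, and you would set the threshold from your own business context.

```python
from math import sqrt
from statistics import NormalDist


def diff_confidence_interval(conv_a: int, n_a: int, conv_b: int, n_b: int,
                             confidence: float = 0.95) -> tuple[float, float]:
    """Normal-approximation interval for (rate B - rate A)."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Unpooled standard error: no assumption that the two rates are equal.
    se = sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    diff = p_b - p_a
    return diff - z * se, diff + z * se


def classify_result(low: float, high: float, min_meaningful: float) -> str:
    """Rule of thumb; min_meaningful is the smallest absolute rate difference you care about."""
    if low > 0:
        return "B is better"
    if high < 0:
        return "A is better"
    # The interval contains zero: are meaningfully large effects still plausible?
    if -min_meaningful < low and high < min_meaningful:
        return "no meaningful difference"
    return "inconclusive"


# Hypothetical: 4.0% vs 4.1% with 5,000 visitors each; 0.5-point changes matter.
low, high = diff_confidence_interval(200, 5000, 205, 5000)
print(f"95% CI for B - A: [{low:+.4f}, {high:+.4f}] -> {classify_result(low, high, 0.005)}")
```

In this example the interval still allows for effects larger than half a percentage point in either direction, so the honest reading is "not enough data," not "no effect." The same 0.1-point difference observed with ten times the traffic produces a much narrower interval, and the verdict becomes "no meaningful difference."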

Final Thoughts

Identifying and fixing the "big" issues that cause users to abandon your forms unnecessarily, and that drag down your conversion rate, is often the first step in optimizing online forms or checkouts. Form builder tools like LeadGen App are excellent for quickly identifying and resolving these issues.

At this point, many businesses rub their hands, proclaim "job done," and move on to the next item on their problem list. This is a missed opportunity. Even after the primary issues in a form have been addressed, there is still substantial potential to improve conversion rates through a properly executed experiment or A/B testing campaign.

We hope this guide has walked you through the sometimes tough job of getting your A/B testing program up and running, and convinced you of its benefits. Happy testing!